The Department for Transport's £2m local roads health check, which used artificial intelligence to analyse video surveys, has been completed, with results showing broad similarities between the regions.

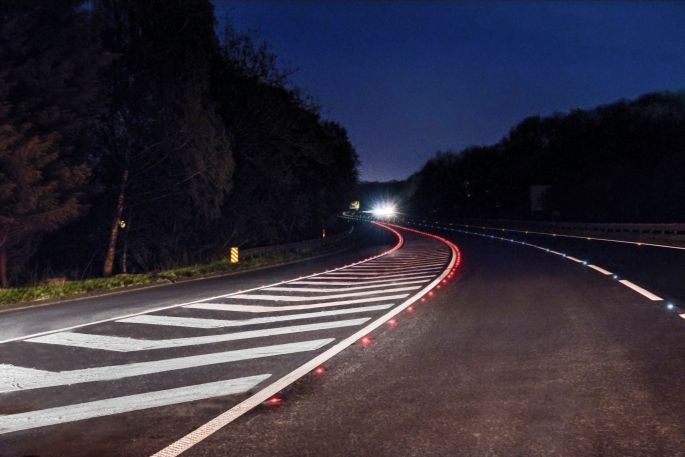

The survey, announced last year to much fanfare, had three main strands: road condition, footway condition and road markings condition. It promised artificial intelligence analysis of road condition through data specialists Gaist, with support from the Local Council Roads Innovation Group.

While the full results are yet to be released publicly, an exclusive briefing from Gaist to the Road Safety Markings Association outlined some basic findings.

Dr Stephen Remde, director of innovation and research at Gaist, told conference delegates that there were ‘no major divisions between regions, no north-south divide,’ when it came to road markings.

Some regions did appear to have much larger numbers of unmarked roads, but this mainly reflected the size of their rural or unclassified networks.

‘The results were pretty uniform throughout the country. The prevalence of lines depends on the class of the road itself. Practically all A and B roads have some visible road markings. C roads have much less,’ Dr Remde said.

‘We can see the condition of the road markings decreases down the hierarchy of roads. A roads are generally better than B, and in turn better than C, which makes sense.’

He added that a lot of value had been created from the data, which is currently being analysed by the DfT.

‘We were able to cost-effectively extract valuable information. Where we work with local authorities we can do this on all their roads – to help target maintenance and work closely with contractors to target problematic areas.’

Over the last nine years, Gaist has been using video as its main collection method for roads data.

It has an image bank covering every single metre of the A, B and C network in England, as well as all major cities in Scotland and about a third of the unclassified roads in England.

For the national health check it used:

- About 21% of rural networks

- 22% of semi-urban

- 53% of urban networks

- 36% of city networks

Most of the data was from its existing data bank, which uses human inspections and analysis rather than AI. It also collected some additional imagery where the percentages were slightly too low. It then extrapolated the data to create a national picture.
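The article does not describe how Gaist extrapolated from its samples, so the following is only a minimal sketch of one common approach, stratified weighting, in which each network type's sample result is scaled by the full length of that network type. The sampling fractions are those quoted above; the network lengths and the shares of markings in poor condition are hypothetical figures chosen purely for illustration, and all names are invented for this example.

```python
# Sampling fractions quoted in the article for the national health check.
SAMPLED_FRACTION = {"rural": 0.21, "semi-urban": 0.22, "urban": 0.53, "city": 0.36}

# HYPOTHETICAL total network length per stratum, in km (not from the article).
NETWORK_KM = {"rural": 1000.0, "semi-urban": 500.0, "urban": 300.0, "city": 200.0}

# HYPOTHETICAL share of surveyed length with markings in poor condition.
POOR_SHARE_IN_SAMPLE = {"rural": 0.30, "semi-urban": 0.25, "urban": 0.20, "city": 0.15}


def surveyed_km(stratum):
    """Length actually surveyed in a stratum, given its sampling fraction."""
    return NETWORK_KM[stratum] * SAMPLED_FRACTION[stratum]


def national_poor_share():
    """Stratified estimate: weight each sample rate by full network length."""
    total_km = sum(NETWORK_KM.values())
    poor_km = sum(NETWORK_KM[s] * POOR_SHARE_IN_SAMPLE[s] for s in NETWORK_KM)
    return poor_km / total_km


def naive_poor_share():
    """Unweighted estimate: pool all surveyed km, ignoring uneven sampling."""
    total = sum(surveyed_km(s) for s in NETWORK_KM)
    poor = sum(surveyed_km(s) * POOR_SHARE_IN_SAMPLE[s] for s in NETWORK_KM)
    return poor / total


if __name__ == "__main__":
    print(f"stratified estimate: {national_poor_share():.4f}")
    print(f"naive pooled estimate: {naive_poor_share():.4f}")
```

With these made-up inputs the pooled figure comes out lower than the stratified one, because urban networks are sampled more heavily than rural ones. That is the kind of distortion reweighting by network type is meant to correct.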

However, Dr Remde said: ‘A very detailed survey of white lining is not something we could replicate nationally. Our inspectors look through each image and annotate them accordingly. Then we used an artificial intelligence approach called deep learning.

‘We created the training data to teach our AI model how to do our specific task [recognise road markings, lines and symbols]. Our inspectors do a process known as annotation where they look at images and mark them up. They did this on 15,000 different images for the training data.’

These images and annotations are then fed into the AI model, which is trained to recognise road markings. Through ‘inference’, the AI model then provides condition data analysis itself.

Through the process, Gaist found that ‘the ground truth’ from inspectors was often ‘not as good as the image created by our deep learning algorithm’.